Immune-mediated diseases such as allergies, asthma, and autoimmune disorders have increased alongside urbanization. A suspected cause is an overly clean environment, where contact with nature and its microbes is lost. Adjunct Professor Olli Laitinen researches the health effects of microbial exposure at Tampere University. He also serves as Chief Research Officer at Uute Scientific Ltd, a company that produces an extract containing inactivated microbes.

Olli Laitinen’s research group at Tampere University and Uute Scientific have utilized the computing and sensitive data services of Finland’s ELIXIR node at CSC – IT Center for Science in their studies. Over the course of more than ten years, samples have been collected from over 500 individuals, including infants, preschoolers, and adults. Part of the data has been stored in CSC’s secure environment.

Laitinen emphasizes that the root cause of today’s immune-mediated diseases is the loss of contact with nature and living in an overly sanitized environment.

For example, atopic dermatitis is a common, partly hereditary disease that affects approximately 20–30% of the Finnish population. Its symptoms include itching, dryness, roughness, redness, and skin lesions. The underlying cause is abnormal immune system function.

“In the Nordic countries, atopic dermatitis is prevalent. It has been observed that many immune-mediated diseases become more common at the population level as one moves northward.”

The PREVALL project, led by the universities of Tampere and Helsinki, has studied the impact of plant- and soil-based materials on the development of allergies in children. The project has also investigated whether the onset of atopic dermatitis in infants could be prevented.

Read more here:

Immune-mediated diseases, such as allergies, asthma and autoimmune diseases, have increased with urbanisation. Researchers suggest that the reason for this is the excessive cleanliness of our environment, as a result of which we have lost touch with nature and its microbes. Adjunct Professor Olli Laitinen studies the health effects of microbial exposure at Tampere University. He also serves as Chief Science Officer at Uute Scientific Ltd. The company produces an extract containing inactivated microbes, which can be used as a raw material in cosmetics, for example.

Microbial exposure can be easily increased by spending time in nature and coming into contact with soil. Handling natural materials with a wide range of microbes changes the microbiota of the body. Laitinen’s projects explore solutions suitable for urban areas to influence the prevalence of immune disorders by modifying the green environment and consumer products.

Laitinen believes that microbial exposure starts at birth.

“When we are born, our bodies are not immunologically complete. At the moment of birth, we encounter practically millions of different life forms. That’s when our immune system starts to learn what is dangerous and what is harmless.”

Laitinen stresses that the root cause of today’s immune-mediated diseases is the fact that we have lost touch with nature and live in an environment that is too clean.

“Humans have lived in natural conditions for hundreds of thousands of years. Mothers have given birth squatting on animal hides, and babies have been wrapped in plant materials. From our first moments, we have been in contact with the soil and nature. We adapted to this exposure.”

“At birth, our bodies get a full blast of microbes and our immune system gets to work. At that point, it is important for the immune system to be able to distinguish between what is dangerous and what is harmless. What is harmless is, of course, our own bodies. However, the immune system also needs to recognise that not all external exposure is dangerous either. Therefore, it is not wise to develop allergies to animal dander, for example. The immune system must learn which microbes are genuinely dangerous and pathogenic.”

Childbirth in a hospital is quite sterile compared to nature.

“If you’re in an environment where the immune system doesn’t get a lot of learning material, the system starts act so that anything external is potentially dangerous. This leads to allergies and asthma, atopic dermatitis, or worse: a situation where the immune system cannot distinguish between the body’s own cells and pathogens, so it starts destroying the former, leading to various autoimmune diseases.”

Promising results have now emerged on how a biodiverse environment can prevent the development of autoimmune diseases, such as type 1 diabetes. Laitinen refers to a part of Noora Nurminen’s doctoral thesis at Tampere University, where she studied the amount of green environment and its impact on the development of type 1 diabetes.

“Type 1 diabetes occurs when inflammatory cells in the immune system are activated in the pancreas and destroy insulin-producing cells. Nurminen examined a cohort of more than 10,000 children to learn how the growing environment during the first year of life influenced the development of diabetes. The results showed that an agrarian environment was healthy for children. Children living in rural areas did not develop diabetes or the autoimmune process leading to it as often as children living in urban areas, or their disease process started much later than that of children living in urban areas.”

The diversity of the microbiota in the human body has declined considerably, especially in the Western world. According to one estimate, urbanised people have 60% of their original microbiota remaining on the skin, and only 50% in the gut. In some areas of the United States, the microbial loss is even higher. As it happens, citizens of the U.S. have more inflammatory diseases than citizens of other countries.

Olli Laitinen believes that the immune system of newborns should start receiving training in the form of exposure to nature when leaving the maternity ward at the latest. Without exposure to nature and its microbes, our bodies’ immune defences cannot function properly. The overreacting immune system may lead to diseases. For example, in the case of allergy, the body misinterprets pollen as a virus.

“We base our research on the function of the immune system and the disruption caused by lack of exposure to nature. The natural role of immunoglobulin E has been to fight parasitic infections, but now that there are far fewer of them, IgE is a free agent looking for new tasks. Such as reacting to pollen.”

Immunoglobulins, or antibodies, are proteins produced by the cells of the body’s defence system. The role of antibodies is to help the defence system destroy invaders, such as bacteria and viruses. IgE-type antibodies have been deprived of their natural activity due to excessive hygiene and sterility, and are therefore inactive. Now, the IgE response is incorrectly activated e.g. against the proteins in pollen, causing allergic hypersensitivity reactions.

Immunoglobulin E is found in allergies and allergic diseases. In allergies, the body produces it to fight off things like pollen or certain foods. The antibody attaches to cells in the skin and mucous membranes and releases histamine. This is what makes us sneeze, our breathing to become obstructed and our eyes swell shut. In developing countries, where parasitic infections are more common, IgE often occurs at high levels without any allergic symptoms.

The “false enemies” of immunoglobulins are a great example of biodiversity loss, which also applies to the microbiota.

“Now that we’re being sold a lot of antibacterial substances, we are actually cleaning away all the bacteria. This is not desirable. It would be better to have a well-established microbiota around us, because that helps us avoid sudden, major changes.”

Changes in the microbiota can cause antibiotic resistance, which is a big problem. Antibiotic-resistant bacteria carry resistance genes and often become dominant in microbial populations.

“Pathogens are usually fast-growing microbes. The abundance of pathogens increases the exchange of genes between them, strengthening their resistance to antibiotics,” says Laitinen, who has also studied antibiotic resistance.

“Hopefully, in the future we will have a safe amount of diverse microbes in our environment so that antibiotic-resistant bacteria cannot thrive.”

Atopic dermatitis is a common, partly hereditary disease affecting about 20–30% of the population in Finland. Its symptoms include itchiness, dryness, roughness, redness and breakouts of the skin. This is due to abnormal immune system function.

“In the Nordic countries, atopic dermatitis is common. It has been observed that many immune-mediated diseases become more prevalent at the population level as we move northwards.”

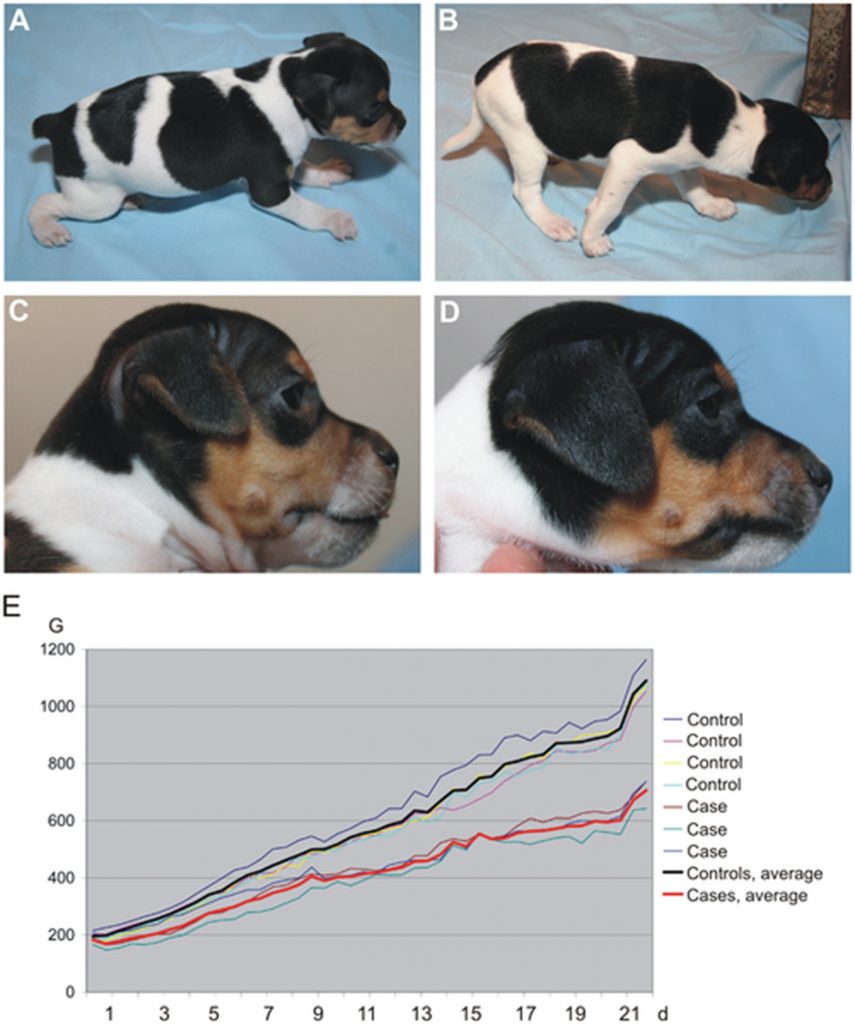

The PREVALL project, led by the Universities of Tampere and Helsinki, has studied the impact of plant- and soil-based materials on children’s allergies. The project has examined whether it would be possible to prevent the development of atopic dermatitis in babies. Children with both parents diagnosed with atopic dermatitis were included in the study.

“In that case, the child has about a 40% risk of developing the same disease,” Laitinen points out.

In Johanna Kalmari’s and Iida Mäkelä‘s study, a joint project between Uute Scientific and Tampere University, people suffering from atopic dermatitis were given microbial cream containing the extract developed by Uute Scientific. The microbes were not alive, but the cream contained microbial components to which the body and immune defence system can react. In other words, exposure to nature was administered through a cream. The subjects started using the cream in late summer and autumn, as atopic skin gets worse in winter due to dry air and lower temperatures. The lower amount of natural light also has an impact. The subjects used the cream at least three times a week. The researchers took various samples from the subjects, examining the water permeability and redness of the skin, which are indicators of inflammation.

“The biggest difference was seen in the use of medication. The group that used the microbial extract containing cream needed significantly less medication during the trial period of 7 months. The microbial extract containing cream was able to prevent skin deterioration. The cream is a so-called “nature exposure remedy”. It’s a supportive form of treatment that allows patients to use less medication.”

The newest frontier is no more and no less than space itself.

“Astronauts suffer from various skin problems. Not surprisingly, the International Space Station has a very poor microbial environment. Our extract could be taken into space. Discussions have been held with the European Space Agency (ESA) on the use of the cream.”

Olli Laitinen’s research group at Tampere University and Uute Scientific have used the computing and sensitive data services of the CSC, the ELIXIR Node of Finland in their research. Over the course of more than 10 years, the group has been involved in sampling more than 500 individuals, including infants, kindergarten-age children and adults. Some of the data is stored in CSC’s data security environment.

Uute Scientific’s microbial extract is made by combining various plant composts. It contains inactive microbes, which means they are harmless. However, the immune system recognises microbes, microbial particles and also destroyed pathogens. The material was originally developed at the Universities of Helsinki and Tampere. Its biodiversity makes it a unique raw material for cosmetics and other consumer products worldwide. It contains at least 600 different species of microbes.

Ari Turunen

23.6.2025

Read article in PDF

More information:

University of Tampere

https://www.tuni.fi/en

Uute Scientific

https://www.uutescientific.com/fi/

CSC – IT Center for Science

is a non-profit, state-owned company administered by the Ministry of Education and Culture. CSC maintains and develops the state-owned, centra- lised IT infrastructure.

https://research.csc.fi/cloud-computing

ELIXIR

builds infrastructure in support of the biological sector. It brings together the leading organisations of 21 Euro- pean countries and the EMBL European Molecular Biology Laboratory to form a common infrastructure for biological information. CSC – IT Center for Science is the Finnish centre within this infrastructure.

The ELIXIR Core Data Resources (CDRs) have been selected based on their quality, wide usage, and long-term significance. They are essential to many fields of research, including genomics, proteomics, and drug development. ELIXIR Core Data Resources provide researchers with open and reliable access to biological datasets, promoting new discoveries and accelerating, for example, the development of new drugs, the understanding of diseases, and the identification of biomarkers.

The data analysis services and machine learning models provided by the ELIXIR infrastructure can help identify new drug candidates from large datasets. These resources and databases allow natural compounds to be analysed more quickly and accurately, supporting their development into safe and effective pharmaceuticals.

These are for example ENA, ChEBI and Ensembl. ENA (European Nucleotide Archive) is a database maintained by the European Bioinformatics Institute (EMBL-EBI) that stores and shares sequencing data from various organisms, including microbes, plants, animals, and humans.

ChEBI (Chemical Entities of Biological Interest) is a curated biochemical database that contains information about biologically relevant small-molecule compounds. Ensembl is a genomics and bioinformatics database that provides analysed genomic data from a range of organisms, including humans, animals, plants, and microbes.

The database can be used to look up the biological effects of compound and its target molecules as well as genetic and protein structure data and related genes, aiding research into drug resistance and the effects of mutations.

Read more here:

Tree bark acts as an important chemical defence mechanism against pests. When a plant comes under threat from bacteria or an insect, alkaloids secreted by the plant may, for example, inhibit cell division or the activity of DNA in the insect, preventing reproduction. This is the operating mechanism of paclitaxel and camptothecin, two compounds isolated from the bark of different trees and developed into effective anticancer drugs. Data analyses and databases have now become available to help identify bioactive compounds in trees and other plants.

There are half a million plants in the world, of which an estimated 7% are used for medicinal purposes. Around 25% of prescription medicines in use today are plant-based. This refers to medicines consisting of natural compounds isolated from plants and synthetic derivatives developed from them. Preserving biodiversity is also of paramount importance for pharmaceuticals, as new plant species are constantly being discovered and the chemical composition of even known plants is largely unknown.

Paclitaxel and camptothecin are examples of anticancer drugs that were discovered when samples from potential medicinal plants were systematically screened. The US National Cancer Institute (NCI) screened more than 35,000 plant samples in a research programme that started in 1956 and continued until 1981. The aim of the programme was to identify plant compounds that could be used to prevent or treat cancers.

The ambitious programme also drew on ethnobotany and history. Programme director Jonathan Hartwell compiled an extensive collection of ancient Chinese, Egyptian, Greek and Roman texts on the medicinal uses of plants. To find the samples and obtain accurate botanical information, Hartwell turned to the U.S. Department of Agriculture (USDA). USDA botanists began collecting plants from around the world to be analysed in laboratories.

Research Triangle Institute’s chemists Monroe E. Wall and Mansukh C. Wani received samples of Camptotheca acuminata for study. Known as the Happy Tree in China, Camptotheca acuminata grows naturally on wet banks of the Yangtze River. In traditional Chinese medicine, its leaves and bark have been used to treat various inflammations and infections.

Wall and Wani discovered that the compounds in C. acuminata were highly active in the L1210 mouse leukaemia cell line, meaning that its effects were seen in cancer cells. The L1210 line is commonly used in cancer research and for testing new anticancer therapeutics. It was isolated from a mouse with lymphocytic leukaemia. Wall and Wani isolated an active compound from wood, which was named camptothecin. It was found to be highly effective against leukaemia cells.

Camptothecin binds to an important cellular enzyme, topoisomerase I, and to DNA complexes. This prevents cancer cells from replicating their DNA, resulting in cell death. Despite its effectiveness, camptothecin has serious side effects and poor solubility. A drug being soluble in water is important because it affects the absorption and distribution of the therapeutic agent in the body. Later, derivatives of camptothecin were developed that were water-soluble and better tolerated and retained their efficacy. These include topotecan and irinotecan. Topotecan (Hycamtin) is used for ovarian, lung and cervical cancer, while irinotecan (Camptosar) is used primarily for colon and rectal cancers.

Synthetic derivatives developed from a natural compound can be significantly more effective than the original compound. In the 1980s, the Japanese company Yakult Honsha developed irinotecan, a derivative of camptothecin. It was then discovered that its active form in the body is its metabolic product 7-ethyl-10-hydroxycamptothecin, which is about 100 to 1,000 times more active than irinotecan itself. The compound was given the name SN-38, which stands for the pharmaceutical company code “SmithKline Number 38”. It is not active as such, but acts as a prodrug. SN-38 is a potent anticancer agent that is produced in the body when irinotecan is converted to its active form. Conversion to SN-38 takes place in the liver and other tissues. It is therefore a modified version of naturally occurring camptothecin with added ethyl and hydroxyl groups. These changes resulted in a highly effective therapeutic agent. Some individuals carry the UGT1A1*28 mutation. A mutation in the UGT1A1 gene (such as UGT1A1*28) may reduce the activity of the enzyme and slow down the elimination of SN-38, thereby increasing its toxicity. This may increase the drug’s side effects. The Ensembl database can be used to study the UGT1A1 gene, its mutations and possible effects on SN-38 metabolism, for example.

Wall and Wani continued to study the plant samples after the discovery of camptothecin. They were asked to analyse samples of Pacific yew (Taxus brevifolia).

The Pacific yew is one of five genera in the Taxaceae family. It is a slow-growing tree native to North America, where it is found in the shade of giant conifers on the banks of streams, in deep ravines and in wet passes. Its wood is hard but of limited use. The tree has few natural pests because most parts of it are poisonous. In 1971, Wall, Wani and their colleagues published a study in which they presented a compound isolated from the bark of the yew tree. It prevents microtubules from breaking down, stopping cancer cells from dividing. The compound was named paclitaxel (Taxol).

Paclitaxel was an effective cancer drug, but there were environmental concerns. The extraction of the compound from the yew tree killed the rare tree. As the natural source (yew tree bark) was not sufficient for large-scale production of the drug, a semi-synthetic method was developed in the 1990s using 10-deacetylbaccatin from the needle of the yew tree as the raw material. The compound (10-DAB) is a precursor to paclitaxel, and by adding benzylamine to it, pure and ecologically sustainable paclitaxel can be produced. Paclitaxel is one of the most commonly used medicines for breast and ovarian cancers.

The ELIXIR Core Data Resources (CDRs) have been selected based on their quality, wide usage, and long-term significance. They are essential to many fields of research, including genomics, proteomics, and drug development. ELIXIR Core Data Resources provide researchers with open and reliable access to biological datasets, promoting new discoveries and accelerating, for example, the development of new drugs, the understanding of diseases, and the identification of biomarkers.

The data analysis services and machine learning models provided by the ELIXIR infrastructure can help identify new drug candidates from large datasets. These resources and databases allow natural compounds to be analysed more quickly and accurately, supporting their development into safe and effective pharmaceuticals.

ENA (European Nucleotide Archive) is a database maintained by the European Bioinformatics Institute (EMBL-EBI) that stores and shares sequencing data from various organisms, including microbes, plants, animals, and humans.

Since ENA contains genomic and sequencing data from all forms of life, it is a key resource for biodiversity researchers analysing species’ genetic diversity, population genetics, and evolution. It aids in the identification of new species (via DNA barcoding and metagenomics) and the study of relationships between species (through phylogenetic analyses).

The genetic databases included in ENA enable large-scale meta-analyses and comparisons of genetic information across different populations or species. This supports progress in a wide range of research areas such as evolutionary biology, disease research, and medicine. ENA is openly accessible to researchers worldwide.

ChEBI (Chemical Entities of Biological Interest) is a curated biochemical database that contains information about biologically relevant small-molecule compounds. It provides accurate chemical and biological data on compounds such as drugs, metabolites, and natural products.

ChEBI includes precise information on chemical structure, molecular formula, mass, and isomeric details, which helps researchers analyze the chemical properties of pharmaceutical compounds.

Search example: The database can be used to look up the biological effects of paclitaxel and its target molecules.

Ensembl is a genomics and bioinformatics database that provides analysed genomic data from a range of organisms, including humans, animals, plants, and microbes.

Search example: The main molecular target of paclitaxel is the tubulin protein. Ensembl provides genetic and protein structure data on tubulin and related genes, aiding research into drug resistance and the effects of mutations.

Ensembl includes information on genetic variations that may affect the efficacy and side effects of Taxol. For instance, the enzymes CYP3A4 and CYP2C8, which metabolize Taxol, can carry mutations that impact the drug’s effectiveness.

Ari Turunen

8.5.2025

Read article in PDF

More information:

ELIXIR Core Data Resources

https://elixir-europe.org/platforms/data/core-data-resources

CSC – IT Center for Science

is a non-profit, state-owned company administered by the Ministry of Education and Culture. CSC maintains and develops the state-owned, centra- lised IT infrastructure.

https://research.csc.fi/cloud-computing

ELIXIR

builds infrastructure in support of the biological sector. It brings together the leading organisations of 21 Euro- pean countries and the EMBL European Molecular Biology Laboratory to form a common infrastructure for biological information. CSC – IT Center for Science is the Finnish centre within this infrastructure.

Vinca alkaloids are the first known naturally-derived anti-cancer drugs. Vinblastine and vincristine are on the WHO list of essential medicines. The Madagascar periwinkle (Catharanthus roseus) is one of the few plants that have directly produced approved anticancer drugs. The names vinblastine and vincristine are derived from the genus Vinca, to which the Madagascar periwinkle belongs. This flower that grows on the island of Madagascar. has saved thousands of children with lymphatic leukaemia.

“It is fascinating that molecules created by the process of mutual survival between plants and insects can influence human biological processes. In nature, an active chemical structure is no coincidence, but repurposing these rare molecules for new uses such as medicine requires innovation,” says Tommi Nyrönen, director of ELIXIR Node of Finland. Nyrönen has studied medicinal substances.

“Natural compounds that may be toxic to one species can, if properly dosed, help another species, as in the case of vinca alkaloids. What’s exciting is what we don’t yet know, because we don’t yet know all the microbes or plants on Earth yet. Similar discoveries can be made in the future by collecting and analysing molecular-level data from research in living nature.”

Information on vinca alkaloids can be found in many databases. For example, ChEMBL, BioStudies, UniProt and Reactome provide information on pharmacological properties, target proteins (such as tubulin), mechanisms, and cellular effects.

“ELIXIR is a data infrastructure on living nature. The databases are part of ELIXIR’s data repositories, which are freely available for scientific research, education and industry,” says Nyrönen.

Read more here:

The Madagascar periwinkle (Catharanthus roseus) is a beautiful flower that grows on the island of Madagascar. It is one of the most important medicinal plants in the treatment of cancer and has saved thousands of children with lymphatic leukaemia. The Madagascar periwinkle is a great example of why biodiversity needs to be protected. It has grown in isolation on the island and developed genome mutations, creating secondary metabolic products that help the plant to survive in the Madagascar ecosystem. More than 200 alkaloid compounds are found in the Madagascar periwinkle, of which vincristine and vinblastine are used in medicinal treatments. Although new cancer drugs are constantly being developed, vincristine and vinblastine, or vinca alkaloids, are still important in medicine.

The biosynthesis, which is the enzyme-catalysed process in which simple compounds are converted into new compounds, of the Madagascar periwinkle was studied for years. The plant’s leaves have traditionally been used in Madagascar to lower blood sugar levels and control diabetes, as well as to treat infections and wounds. When Canadian researchers Robert Noble and Charles Beer started to investigate how the Madagascar periwinkle lowers blood sugar in the 1950s, they found something else interesting instead.

Noble and Beer gave rats flower extracts orally, but no effect on serum glucose levels was observed. The researchers tried a different approach, giving the rats the extract intravenously in the hope that it would boost the blood sugar lowering effect. This led to unexpected consequences: the rats died from bacterial infections. However, the researchers found that the extracts of the plant had an immunosuppressive effect, meaning a strong effect on white blood cells and bone marrow. This led to the discovery of anti-cancer properties in further research. Noble and Beer kept analysing Madagascar periwinkle substances until they identified the active compound, which they named vincaleukoblastine (vinblastine). Vinblastine interferes with intracellular metabolism and inhibits cell division, which means it is a chemotherapeutic agent.

Charles D. Carmichael and Harold P. S. Harington isolated vincristine from the Madagascar periwinkle in the 1950s. Carmichael and Harington worked for the Canadian Cancer Research Foundation, and their research focused on discovering anti-cancer agents in wild plants. Vincristine was one of the effective substances they found to prevent cancer cells from dividing.

At the same time, Gordon Svoboda and Irving Johnson at Eli Lilly and Company were studying plant samples from around the world in the hope of finding plant extracts that could be used to develop cancer drugs. They attended a conference where the Canadian researchers presented their research.

The researchers found they shared a common interest in the Madagascar periwinkle and started cooperation.

Svoboda and Irving studied the effect of vincristine on microtubule formation and cell division. Microtubules are important for many cellular functions, such as division, transport of materials and maintenance of cell structure. Cell cultures were treated with vincristine, which allowed the researchers to monitor the effects of vincristine under the microscope and assess its effectiveness in preventing cell division.

Vincristine and vinblastine are toxic to insects and herbivores. They are indole alkaloids that inhibit cell division and can paralyse or kill insects and herbivores if they eat the Madagascar periwinkle. In humans, the compounds have a different effect and have been shown to help the body fight cancer cells.

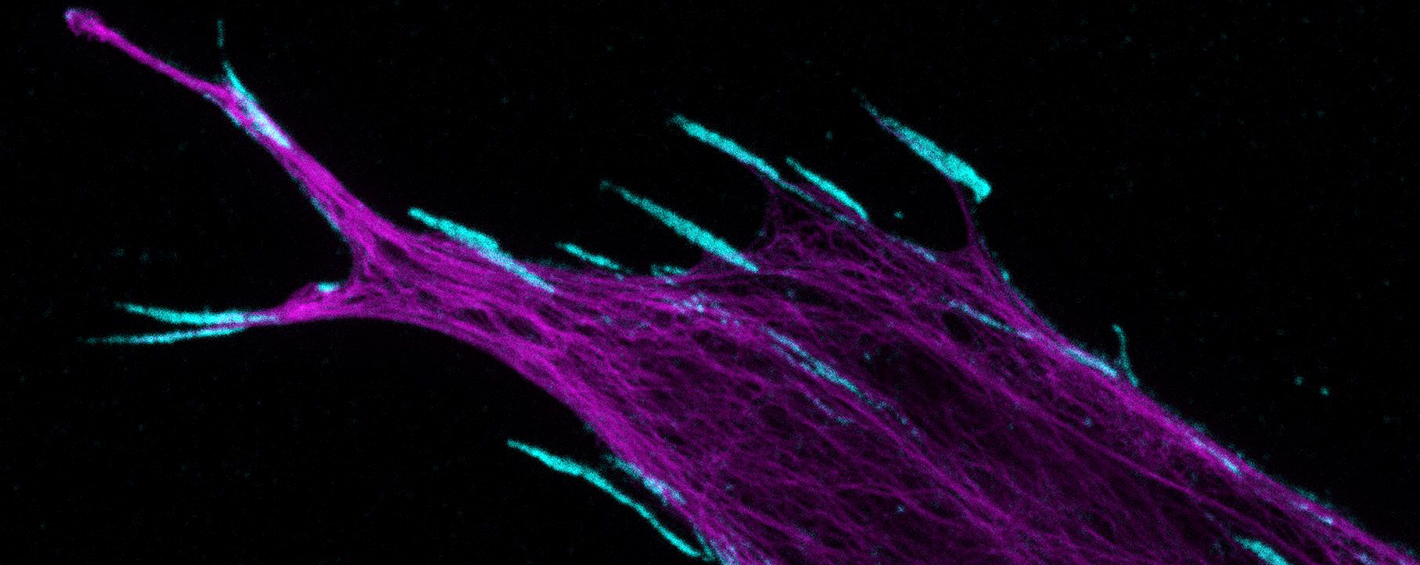

Most plant-based anti-cancer drugs target cell division in one way or another. This makes them effective in treating cancer. Because cancer cells divide uncontrollably, many drugs aim to prevent this process. Vincristine and vinblastine, and paclitaxel from the Pacific yew (Taxus brevifolia), target microtubules that form a part of the cytoskeleton. The cytoskeleton is made up of proteins called tubulin, which form long strands. Vincristine and vinblastine bind ß-tubulins and block the formation of strands, preventing cells from dividing normally. All three substances affect microtubule function, but in different ways. They inhibit the cell from dividing into the metaphase stage. In other words, affecting microtubules prevents tumour growth, if the drug makes the structure of the cancer cells unstable.

Vincristine is typically more effective in treating blood cancers such as acute lymphoblastic leukaemia. Vinblastine, on the other hand, has a better effect on solid tumours. It is used to treat Hodgkin lymphoma, non-Hodgkin lymphoma, breast cancer and testicular cancer.

“It is fascinating that molecules created by the process of mutual survival between plants and insects can influence human biological processes. In nature, an active chemical structure is no coincidence, but repurposing these rare molecules for new uses such as medicine requires innovation,” says Tommi Nyrönen, director of ELIXIR Node of Finland. Nyrönen has studied medicinal substances.

“Natural compounds that may be toxic to one species can, if properly dosed, help another species, as in the case of vinca alkaloids. What’s exciting is what we don’t yet know, because we don’t yet know all the microbes or plants on Earth yet. Similar discoveries can be made in the future by collecting and analysing molecular-level data from research in living nature.”

Information on vinca alkaloids can be found in many databases. For example, ChEMBL, BioStudies, UniProt and Reactome provide information on pharmacological properties, target proteins (such as tubulin), mechanisms, and cellular effects.

“ELIXIR is a data infrastructure on living nature. The databases are part of ELIXIR’s data repositories, which are freely available for scientific research, education and industry,” says Nyrönen.

ChEMBL (Chemical Database) is a chemical database that focuses specifically on the interaction between drugs and their target proteins. It allows for the examination of drugs’ biological effects and pharmacological profiles. The database contains information on drug efficacy, safety, and other biological responses.

Metabolism enables the body to transform active drug compounds into less active or more easily excretable forms. These chemical changes are often facilitated by cytochrome P450 enzymes. Drug metabolism affects how long a drug remains active in the body, how quickly it is eliminated, and how effective it is. If metabolism is slow, the drug may persist longer in the body, whereas rapid metabolism shortens its duration of action. Metabolic pathways can vary between individuals due to genetic factors, environmental influences, and interactions with other medications. As a result, two individuals may have different responses to the same drug.

A bioassay is an experimental method used to measure the potency or effectiveness of a substance, such as a drug, chemical, or natural product, based on its biological response. This is particularly important in drug development as it provides valuable insights into how a substance interacts within the body.

Search: The database allows users to search for specific compounds and their bioassay results, particularly assessing their effects on cytotoxicity or receptor responses. It also provides information on interactions between the queried substance and various drug compounds (drug matrix).

The BioStudies database serves as a central repository for storing descriptions of biological studies. It contains links to datasets stored in other databases, as well as data that do not fit existing structured archives. This allows for the storage of a wide variety of study types in a simple format.

ArrayExpress functioned as a database for functional genomics for over 20 years. In September 2022, its user interface was discontinued, and all data were transferred to BioStudies. This transition enhances data integration and accessibility for the research community.

Search: For example, when studying the effect of vincristine on cancer cell growth, BioStudies may contain experimental setups, analysis methods, and results that aid in interpretation.

A drug, such as vinblastine, can have multiple target proteins that it activates, inhibits, or modifies to achieve its biological effects. Drug target proteins can be involved in various biological processes and cell membranes across different organ systems, with their number varying depending on the drug’s structure and function.

UniProt (Universal Protein Resource) is the world’s leading high-quality, comprehensive, and freely available database of protein sequences and functions, maintained by the UniProt Consortium. It provides extensive and detailed information on protein structure, function, interactions, genetic backgrounds, and diseases.

The database is particularly useful in drug development and understanding drug mechanisms, as it helps map how drugs affect protein function. UniProt contains amino acid sequences of proteins, detailing their structures. It includes evolutionary information and species-specific variations. The database is linked to the Protein Data Bank (PDB), which provides three-dimensional structural insights into protein mechanisms and molecular interactions.

UniProt also provides data on how drugs bind to proteins and alter their function, which helps in understanding how drugs influence protein activity and vice versa. Additionally, it offers insights into the genes encoding these proteins, how gene regulation occurs, and how genetic mutations (e.g., through mutations) can impact protein function and contribute to diseases.

Search: The database can be used to investigate the interactions between tubulin proteins and vincristine and its effects on cell division.

The Reactome database focuses on cellular processes and signalling pathways. It is a manually curated database providing insights into biochemical reactions within cells and organs, including protein, RNA, and biomolecular interactions such as signalling pathways, metabolic pathways, and gene expression.

It also contains information on how disruptions in specific biological reactions can lead to diseases, making it valuable for drug development and biomarker discovery. Reactome offers visual pathway maps that depict molecular interactions within biological pathways. For example, vincristine’s effects can be linked to pathways involved in cell division regulation and apoptosis (programmed cell death).

Search: The database enables researchers to explore how vincristine affects different signalling pathways and its overall impact at the cellular level.

Ari Turunen

27.3.2025

Read article in PDF

More information:

ELIXIR Core Data Resources

https://elixir-europe.org/platforms/data/core-data-resources

CSC – IT Center for Science

is a non-profit, state-owned company administered by the Ministry of Education and Culture. CSC maintains and develops the state-owned, centra- lised IT infrastructure.

https://research.csc.fi/cloud-computing

ELIXIR

builds infrastructure in support of the biological sector. It brings together the leading organisations of 21 Euro- pean countries and the EMBL European Molecular Biology Laboratory to form a common infrastructure for biological information. CSC – IT Center for Science is the Finnish centre within this infrastructure.

A quarter of patients received medications whose efficacy or safety could have been improved by considering the patient’s genome.

Professor Mikko Niemi’s research conducted a nationwide analysis that included all internal medicine and surgical patients in Finnish hospitals, as well as a group of university hospital patients for whom genetic data was available from the THL biobank.

The nationwide cohort included data from 1.4 million people in Finland obtained from THL-managed registries. Two years after hospitalisation, 60 per cent of patients had purchased a prescription medication for which genetic information is relevant.

According to Niemi, genetic information could be highly beneficial in drug treatment.

“Based on current research, many patients could benefit from adjusting their medication based on genetic information.”

If doctors had access to information about patients’ genetics, medication costs and significant adverse effects could often be reduced, and the number of days of sick leave would also decrease.

Niemi’s research group has used the computing services of Finland’s ELIXIR Node at CSC – IT Center for Science to analyse genetic data. Data management has made use of CSC’s sensitive data platform.

In the future, Europeans will have faster and more accurate diagnoses. Collected and analysed genomic data will enable better drug design and preventive treatments.

Niemi sees it as essential that researchers have access to such infrastructure.

“High-quality genomic data storage is crucial for future research. It ensures that new genetic factors influencing drug efficacy and safety can be identified and their impact can be assessed, and that they can ultimately be put into use.”

Read more here:

Professor Mikko Niemi, a pharmacogenetics expert at the University of Helsinki, studies the impact of genes on the effectiveness and safety of medications. In a recently published study, the medications of 1.4 million Finnish patients were analysed, revealing that a quarter of patients received medications whose efficacy or safety could have been improved by considering the patient’s genome. The study used data from registries of the Finnish Institute for Health and Welfare (THL) and biobank data.

People react to medications differently – some experience insufficient effectiveness, while others may have adverse effects. The reason for varying responses may be our physical characteristics, other medication, or genome. If doctors had access to information about patients’ genetics, medication costs and significant adverse effects could often be reduced, and the number of days of sick leave would also decrease.

In the past five years, genetic testing in healthcare has increased.

“There’s now a wealth of research evidence. The key genes influencing drug response have likely been identified. Many of them regulate the amount of a drug in the body. Often, one gene affects many different types of medications,” Niemi says.

In recent years, various gene panels have been developed to analyse multiple genes simultaneously. This can be considered a breakthrough in healthcare. DNA is extracted from the patient’s blood, saliva or tissue. Massive parallel sequencing allows for the targeted study of many genes at once. The panels can be designed to identify genetic variations that may affect, for example, disease risk, drug response or the occurrence of certain hereditary diseases.

Progress in the use of pharmacogenetic laboratory tests occurred in 2020 with the involvement of the European Medicines Agency (EMA).

“At that time, the agency issued a recommendation to test for hereditary DPYD deficiency before initiating fluoropyrimidine-based cancer treatment. This helps prevent serious adverse effects caused by these anticancer drugs. Testing has been a routine since the agency’s recommendation.”

Pharmacogenetic panels typically test between 10 and 20 genes.

“Humans have 20,000 genes. We know well the effects of 10 to 20 genes on drug treatment. These are key to drug response,” says Niemi.

Helsinki University Hospital’s (HUS) pharmacogenetic gene panel covers the 12 most common and clinically significant genes affecting drug treatments. The selection of these genes took into account international guidelines, drug summaries and the prevalence of genetic variations in different populations. Test results are available in Finnish under the title B -PGx-D, in the MyKanta personal health information online service (https://www.kanta.fi/en/mykanta) MyKanta is an online, publicly accessible service where people can access prescriptions, laboratory test results and healthcare records.

“The idea of the panel is that when the suitability of one medication is tested, the patient also has all other relevant genetic factors for many future medications already tested.”

According to Niemi, with the improvement of testing, more drugs are now known to be influenced by genetics. As a result, drug treatment for cancer, for example, has improved. The use of genetic information in psychiatry has also become more common.

”We are starting to have solid research evidence on the benefits of pharmacogenetics in the treatment of depression. Genetic testing has been included in the Current Care Guidelines for the treatment of depression.”

The Current Care Guidelines (Käypä hoito) are expert summaries published by the Finnish Medical Society Duodecim on the diagnosis and effectiveness of treatments for specific diseases.

The required dosage of individual medications can vary dramatically between individuals, sometimes by more than tenfold. This may depend on how quickly or slowly the body eliminates the medication. Cytochrome enzymes (CYP) play a key role in breaking down and eliminating drugs from the body. There is considerable genetic variation in the activity of CYP enzymes, which can lead to vastly different drug concentrations and responses in individuals.

Currently, there is limited knowledge about how beneficial and cost-effective pharmacogenetic tests would be if the genetic background of all hospital patients were known. Niemi’s research conducted a nationwide analysis that included all internal medicine and surgical patients in Finnish hospitals, as well as a group of university hospital patients for whom genetic data was available from the THL biobank. The biobank contains the FINRISKI data, which holds an exceptionally large amount of diverse health data about the Finnish population, including laboratory tests and health registry data.

The nationwide cohort included data from 1.4 million people in Finland obtained from THL-managed registries. Two years after hospitalisation, 60 per cent of patients had purchased a prescription medication for which genetic information is relevant.

“We tracked purchases of medications where we knew genetics influences drug suitability. By analysing genetic variations, we now know for sure that 99 per cent of people in Finland have a clinically significant genetic variant affecting the response to at least one medication.”

The university hospital sample included 1,000 patients, whose genetic information was available from the biobank. Forty per cent of these patients received medications during their hospital stay for which genetic testing could be beneficial. A quarter of them had a gene-drug combination that researchers do not recommend: the medication should be used at a different dosage, or it would be better to choose an entirely different medication.

“Genetic variation is common and affects widely used medications.”

According to Niemi, genetic information could be highly beneficial in drug treatment.

“Based on current research, many patients could benefit from adjusting their medication based on genetic information.”

The benefits are also significant for society. Finland has excellent registry and genomic data management, and is a leader in the use of pharmacogenetic panels.

“In the future, the aim is to assess the economic and health benefits of pharmacogenetic panel testing. The goal is to examine the treatment costs of Finnish patients who have undergone pharmacogenetic testing and compare this to a situation where genetic testing has not been used. For example, if it were possible to identify the ten percent of patients who benefit the most from genetic information, it could lead to savings in healthcare costs, medications, and sick leave.”

Niemi’s research group has used the computing services of Finland’s ELIXIR Node at CSC – IT Center for Science to analyse genetic data. Data management has made use of CSC’s sensitive data platform.

The Genomic Data Infrastructure (GDI) launched in 2022 aims to create a federated infrastructure for researchers which enables an access to European genomic and clinical data. In the future, Europeans will have faster and more accurate diagnoses. Collected and analysed genomic data will enable better drug design and preventive treatments.

Niemi sees it as essential that researchers have access to such infrastructure.

“High-quality genomic data storage is crucial for future research. It ensures that new genetic factors influencing drug efficacy and safety can be identified and their impact can be assessed, and that they can ultimately be put into use.”

GDI will enable retrospective research, including cost benefit analysis on European scale cohorts, as described by Niemi.

“By linking genetic information to disease and treatment information, GDI helps researchers to discover cohorts with specific treatments and genetic variants across Europe, increasing the size of these cohorts and hence supporting the discovery novel genetic effects on medication”, says senior coordinator Dylan Spalding from CSC. Spalding is the co-lead of GDI Work Package 5.

“For clinicians who have a patient who is not responding to medication as expected, GDI will also enable them to find other clinicians across Europe who may have similar patients with different and more effective treatment regimes, and hence improve the treatment of these patients.”

Ari Turunen

6.2.2025

Read article in PDF

Citation

Turunen, A., & Nyrönen, T. (2025). Genetic testing improves medication safety and effectiveness. https://doi.org/10.5281/zenodo.14823385

More information:

Value of Pharmacogenetic Testing Assessed with Real-World Drug Utilization and Genotype Data

Kaisa Litonius, Noora Kulla, Petra Falkenbach, Kati Kristiansson, Katriina Tarkiainen, Liisa Ukkola-Vuoti, Mari Korhonen, Sofia Khan, Johanna Sistonen, Arto Orpana, Mats Lindstedt, Tommi Nyrönen, Markus Perola, Miia Turpeinen, Ville Kytö, Aleksi Tornio, Mikko Niemi

https://ascpt.onlinelibrary.wiley.com/doi/full/10.1002/cpt.3458

DOI: 10.1002/cpt.3458

The research was funded by the Research Council of Finland and the Ministry of Social Affairs and Health. The pharmacogenetics pilot was co-designed and implemented by Kaisa Litonius, Mikko Niemi and Katriina Tarkiainen from University of Helsinki and Helsinki University Hospital (HUS), Noora Kulla, Aleksi Tornio, Kristiina Cajanus and Ville Kytö, from University of Turku and Turku University Hospital, Petra Falkenbach and Miia Turpeinen from University of Oulu, Markus Perola, Kati Kristiansson and Liisa Ukkola-Vuoti from the Finnish Institute for Health and Welfare (THL), Arto Orpana, Mari Korhonen, Johanna Sistonen and Sofia Khan from HUS and Tommi Nyrönen and Mats Lindstedt from CSC.

HUS

CSC – IT Center for Science

is a non-profit, state-owned company administered by the Ministry of Education and Culture. CSC maintains and develops the state-owned, centra- lised IT infrastructure.

https://research.csc.fi/cloud-computing

ELIXIR

builds infrastructure in support of the biological sector. It brings together the leading organisations of 21 Euro- pean countries and the EMBL European Molecular Biology Laboratory to form a common infrastructure for biological information. CSC – IT Center for Science is the Finnish centre within this infrastructure.

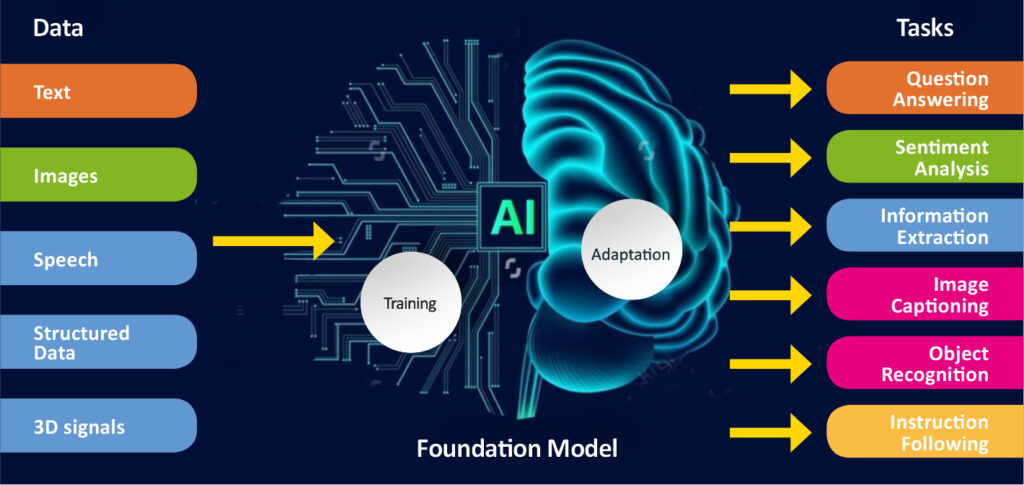

Life science research, such as understanding rare diseases or studying the effects of climate change, requires enormous amounts of data and advanced tools to manage and analyze that data. That’s why ELIXIR Finland was created: to build a unified infrastructure that provides researchers in Finland easy access to these critical resources. Now, ELIXIR Finland celebrates a significant milestone – a decade of advancing life science research in Finland.

Hosted by CSC – IT Center for Science, ELIXIR Finland supports Finnish life science researchers by providing access to essential databases, software tools, training materials, and connecting them to supercomputing resources available across Europe.

ELIXIR Finland’s journey began in 2013, when the state of Finland signed the Consortium Agreement to join ELIXIR, the European infrastructure for life science data. By officially becoming a member in 2014, Finland became the 11th country to participate in the ambitious European initiative and entered the global life sciences and bioinformatics landscape. Today, this membership enables Finnish researchers to collaborate with over 240 research institutes across 22 European countries.

In 10 years, ELIXIR Finland has driven progress and impact in Finnish life sciences e.g. in the following ways:

“The impact of ELIXIR Finland has been intense. In 2014, CSC was working with only tens of computing projects in health and biological sciences. Now, we support over 1,600 research projects with our computing infrastructure. The field is still growing, so in five years’ time we expect to more than double the amount of projects through our European collaborations,” says Tommi Nyrönen, Director of ELIXIR Finland at CSC.

Watch the video of ELIXIR Finland’s ten years:

A game-changer in secure cloud services

From the beginning, ELIXIR Finland has worked closely with the life science community to develop services for their needs. In the early 2000s, cloud services were rapidly evolving. CSC launched its first community cloud service in 2013. It quickly became evident that life science researchers needed a secure cloud service to analyze sensitive human data.

This led to the development of ePouta, a secure cloud service designed with data protection as a top priority. It allows researchers to access resources through a secure network connection, either using dedicated fiber optic links or VPN-like technologies. This added a level of security while making it easier for organizations to use cloud resources, as they were integrated into their own networks.

Looking back, ePouta has been a game-changer, enabling researchers to access cloud resources on their own and ensuring that sensitive data can be securely processed. This development work on security laid the foundation for future customer collaboration, such as development of Findata’s and Statistics Finland’s secure processing environments.

Transforming data access management

Early on, CSC recognized the importance of reliable authorization tools to manage sensitive data. The need for such tools became evident when THL Biobank, a part of Finnish Institute for Health and Welfare, sought a more efficient way to control access to their research data. In response, CSC and ELIXIR Finland developed the Resource Entitlement Management System (REMS), which streamlines data access management and helps make data available for reuse.

REMS is a fully electronic system that handles data access applications, records decisions, and issues machine-readable permissions. Its flexibility and security have made it widely adopted not only in Finland but also internationally, including in Australia. Originally designed to manage research data access, REMS has expanded, now also facilitating tasks like ordering death certificates from Statistics Finland, and integrating software into secure environments.

In line with ELIXIR Finland’s commitment to open standards, REMS supports global data access management standards and is freely available on GitHub under the permissive MIT license. This has enabled quick adaptation to regulations like the Finnish Act on the Secondary Use of Health and Social Data, as well as supporting large-scale international collaborations such as the European 1+ Million Genomes initiative.

Advancing secure data management

CSC’s Sensitive Data Services began as a Nordic project to improve the secure management and exchange of sensitive biomedical data across borders. This collaboration, known as NeIC Tryggve project, led to two important insights: the need for a service to store biomedical data while ensuring it remains within national borders, and the development of a new service, alongside ePouta, to manage sensitive data securely.

The Nordic collaboration quickly demonstrated the potential of securely transferring, storing, and accessing data. The decade’s work was realized in 2019 when video files stored in Finland and Sweden were accessed remotely from Oslo, Norway, using a mobile phone, all within a secure environment on ePouta.

Today, CSC’s Sensitive Data Services have evolved into a trusted platform for secure data management. From early prototypes to the fully operational services available today, ELIXIR Finland’s support and networks have been critical in shaping the vision and capabilities of Sensitive Data Services. One of the most recent developments within this platform is FEGA (Federated EGA), a service developed in collaboration with the ELIXIR network and specifically tailored for sensitive biomedical research data. By leveraging a federated infrastructure, FEGA allows genomic datasets to remain under the control of local institutions while still being accessible for collaborative research.

The ComPatAI consortium focuses on analysing histological samples related to breast and prostate cancer. Using digitalised images allows the researchers to measure and automatically compute different cell types.

One of the key questions is how quickly data can be transferred and used. Computing and data storage capacity are constantly in high demand. This is where the services provided by CSC, the Finnish ELIXIR node, come in.

“We’re extremely pleased with the support CSC has given us, since this is an exceptionally large project that uses very large datasets. We are in a privileged position because we have the support of an organisation like CSC. This is a clear competitive advantage for us, and we really appreciate it, ” says Pekka Ruusuvuori, Associate Professor of the Institute of Biomedicine at the University of Turku.

Read more here:

Pekka Ruusuvuori, Associate Professor of the Institute of Biomedicine at the University of Turku, leads the ComPatAI consortium, which is developing new ways to model histopathological tissue samples with generative and predictive AI. In medicine, histological samples are used to assess a patient’s need for treatment. The consortium’s goal is to use big data to create AI models that would produce more accurate diagnostic information in pathology.

In addition, they are developing virtual histological staining models based on generative AI. Besides Ruusuvuori, the consortium consists of research director and Adjunct Professor Leena Latonen of University of Eastern Finland and Teemu Tolonen, Adjunct Professor and chief physician at the department of pathology at the Fimlab laboratories.

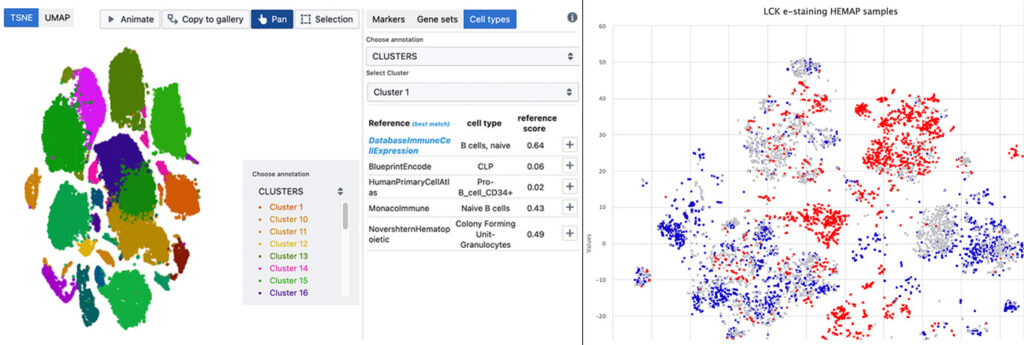

The ComPatAI consortium focuses on analysing histological samples related to breast and prostate cancer. Using digitalised images allows the researchers to measure and automatically compute different cell types.

“Our work has focused mainly on prostate and breast cancer. There is ample data available on these types of cancers, as they are the most commonly encountered cancers in men and women. However, we want to create a very general-purpose model that could then be further refined for different and new applications.”

According to Ruusuvuori, the field of pathology is becoming increasingly digitalised. He says that in this sense, the Finnish pathological community is among the pioneers.

“In Tampere and Turku, we have moved completely to using digital pathology in diagnostics. Each time a sample is taken, it is scanned into a high-resolution digital image. There is a lot of routine diagnostics. As the population ages, we encounter more and more patients with cancer. This also means that there is loads of data coming in.”

The consortium receives scanned images of histological slides from Fimlab, the largest healthcare laboratory company in Finland. Fimlab’s clientele includes hospitals, health centres, occupational healthcare service providers and private medical stations. The Finnish Medicines Agency Fimea has currently granted the consortium a licence for using data from 160,050 cases, which translates to a total of approximately 600,000 slide images. Together, the images add up to about 0.8 petabytes of data, meaning that each file accounts for approximately 1.3 gigabytes. The massive amounts of data are currently being anonymised and transferred to the LUMI supercomputer in the CSC – IT Center for Science, an ELIXIR node in Finland. The project is one of the largest data transfers made to LUMI so far.

“It is incredible that we get to use these data for our research. We want to use this data to create AI solutions that function well in pathological work”, Ruusuvuori explains.

The researchers are currently set to have up to 2.5 million digitised whole-slide images at their disposal by the end of the project. This corresponds to a total of three petabytes of data.

“We have been granted permission to use technically all data produced in Fimlab’s routine digital pathology operations.”

Pekka Ruusuvuori has a strong background in signal processing, and he specialises in image analysis. Ruusuvuori is interested in how deep neural networks that are used in AI applications could be developed to be a better fit for diverse use cases.

According to him, machine can generally be taught to recognise the same things that humans would pick up on. It can learn to tell apart different tissue types or to distinguish cancerous tissues from healthy ones. It can be used to measure different factors in images or cells, such as how aggressive a cancer is and how far it has progressed. Artificial intelligence can identify cancerous areas in tissue samples before examination by a pathologist. It may also suggest a score based on the data it has assessed. For example, prostate cancer tumours are given a Gleason score, which indicates how aggressive or advanced the disease is.

“It’s entirely possible to train AI to perform many tasks that human pathologists usually take care of.”

“Previously machine learning models have been built with a certain variable and teaching material that shows a certain object appearing in a specific part of the image and which score this finding corresponds to. It would take countless hours of work for us to mark this information on all the hundreds of thousands of images we are using.”

This annotation data has previously played a key role in teaching artificial intelligence to automatically detect abnormalities such as cancer cells in the samples. Ruusuvuori says that algorithms have been improved and are consequently able to use unannotated raw data.

“I think the most interesting thing about what we are doing with these algorithms is what else we can extract from these images. In other words, to look at the features that machines can detect but humans cannot. These slide images include all visual data that we have. If there is a statistical link to be found there, the machine learning algorithm will find it. However, these links may be extremely complex. Modern neural networks can accurately detect complex links between spatial data and the predicted variable. These things can be very difficult for us humans to grasp.”

Together with his research group, Ruusuvuori has been able to successfully predict gene expression and mutations directly from histological images. Gene expression refers to the process of a cell producing the molecules that is encoded in its DNA. The gene expression varies across different tissues. Based on the images, AI can detect miniscule changes that are invisible to the human eye.

“Based on the images, the machine can identify effects of gene expression in cells and tissues. It can detect even the slightest phenotype variations, including those that we as humans are not trained to see. I want to highlight that so far, we only have indicative results, and that the method will not work for all tissue types or genes. Some gene expressions do not lead to tissue-level changes that could be predicted from a whole slide image.”

ComPatAI consortium is currently developing a so-called foundation model for utilising large datasets. As the name suggests, this model would create a general-purpose foundation for developing further AI solutions. The model is trained in histology based on a large set of samples, without target variables or annotation data.

“When you start teaching a model like this to recognise diseases such as breast or prostate cancer, it starts to learn to perform the task it has been given. This will allow us to reach more accurate solutions much faster than before. It allows us to use large, unannotated datasets. This is a big step forward for us.”

ComPatAI consortium is currently building its own foundation model based on a Finnish dataset.

“This is basic research that will allow us to be among the first to further refine these models in Finland. I do not want us to rely solely on big foreign firms or research groups, and instead wish that we would be able to be build a model based on Finnish data. We have high-quality, population-level cohort data that need to put to good use. I hope that this will lead to the establishment of companies in Finland that will develop solutions to benefit patients in routine diagnostics.”

One of the key questions is how quickly data can be transferred and used. Computing and data storage capacity are constantly in high demand. This is where the services provided by CSC, the Finnish ELIXIR node, come in.

“We’re extremely pleased with the support CSC has given us, since this is an exceptionally large project that uses very large datasets. We are in a privileged position because we have the support of an organisation like CSC. This is a clear competitive advantage for us, and we really appreciate it.”

Digital pathological data and other potentially sensitive health data types, such as registry and omics datasets, are going to become more readily available through CSC’s data secure user environment.

“We’re just getting started with the development work”, says Tommi Nyrönen, who leads the ELIXIR Finland Node.

“ELIXIR’s node in Finland has helped transform the biomedical resources required by CompPatAI to a platform service operated by CSC. The CSC Sensitive Data platform emerged from this need. However, it continues to serve various other researcher projects in the field. One of these is the EU’s digital pathology archive initiative bigpicture.eu, which is set to launch in 2026. It is a sustainable solution for managing digital pathology datasets and bringing them to high-performance computing services across Europe.”

Ari Turunen

26.12.2024

Read article in PDF

Citation

Turunen, A., & Nyrönen, T. (2024). The ComPatAI consortium uses large datasets to create an AI learning model for pathology. https://doi.org/10.5281/zenodo.14823370

More information:

FIRI

Article was supported by the Research council of Finland under grant number 345591 for ELIXIR European Life-Sciences Infrastructure for Biological Information (FIRI 2021)

Ruusuvuorilab

Fimlab

University of Turku

CSC – IT Center for Science

is a non-profit, state-owned company administered by the Ministry of Education and Culture. CSC maintains and develops the state-owned, centra- lised IT infrastructure.

https://research.csc.fi/cloud-computing

ELIXIR

builds infrastructure in support of the biological sector. It brings together the leading organisations of 21 Euro- pean countries and the EMBL European Molecular Biology Laboratory to form a common infrastructure for biological information. CSC – IT Center for Science is the Finnish centre within this infrastructure.

Associate Professor Mikael Niku from the University of Helsinki wants to determine the kinds of mechanisms the maternal microbes use to modulate the development of the immune system of the offspring.

His research group carries out data analyses using the equipment of the CSC – IT Center for Science, the Finnish ELIXIR node.

The microbes process the substances our bodies absorb, turning them into metabolites.

“In the past, our group – like many other research groups – focused on investigating whether maternal bacteria are transferred from the mother to the foetus. After all, almost no living bacteria is present in a healthy foetus. However, we now know that microbes produce small-molecule metabolites that are then transferred to the foetus.”

Niku is interested in finding out what sort of metabolites are transferred to foetuses and how these metabolites affect the foetal development.

“The metabolites are absorbed from the gut into the bloodstream, from where they’re transferred to placenta and to the foetus. We found that the concentrations of some metabolites are associated to the functioning of genes in the foetus. These genes are often linked to the immune system and its development.”

The research involves analysing microbiomes using amplicon sequencing, targeting the 16S ribosomal RNA (rRNA) gene region. This makes it possible to study the microbiota composition. The 16S gene regions are sequenced and identified through publicly accessible databases.

According to Niku, before long, it can be determined what kind of bacteria and bacterial products the foetus needs to develop an optimal immune system.

“For example, it could allow us to develop probiotic products that contain necessary microbes or microbial products that are currently unavailable.”

Read article here:

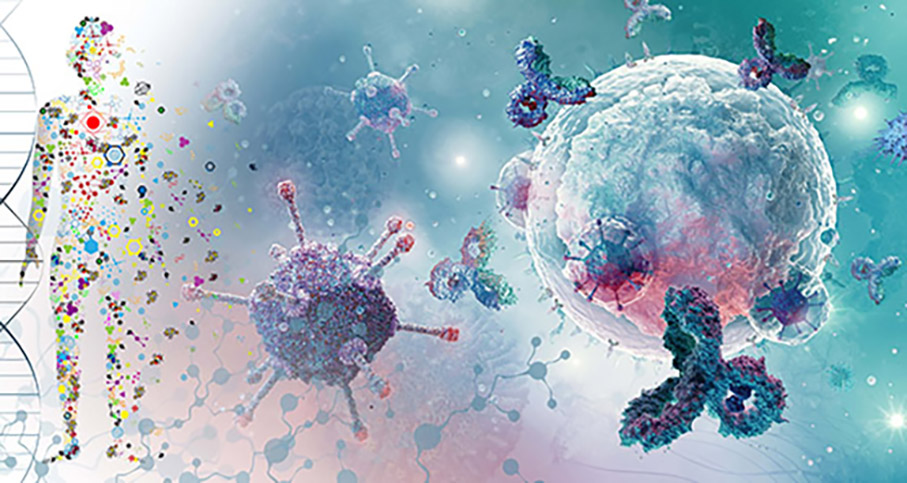

Mikael Niku’s team studies foetuses and how the body’s microbiota develops after birth. Niku is especially interested in how the mother’s microbiota affects the foetal development and immune system in different mammals.

It is a long-established fact that after birth, microbes in the mothers’ body are passed on to the offspring. This prepares the child for life outside the womb.

“The mother’s microbiota also affects immune system development. The immune system learns to accept beneficial gut microbes and to fight pathogens. A diverse microbiota will naturally ward off pathogens,” says Mikael Niku, Associate Professor at the Developmental Interaction Lab at the University of Helsinki.

Niku’s aim is to determine the kinds of mechanisms the maternal microbes use to modulate the development of the immune system of the offspring. His research group carries out data analyses using the equipment of the CSC – IT Center for Science, the Finnish ELIXIR node.

The research involves analysing microbiomes using amplicon sequencing, targeting the 16S ribosomal RNA (rRNA) gene region. This makes it possible to study the microbiota composition. The 16S gene regions are sequenced and identified through publicly accessible databases.

A large proportion of the immune system’s cells are in the intestines. They develop from blood stem cells, taking the form of different types of white blood cells. Diet, lifestyle and the medicines a person takes and the chemicals in their environment all affect the intestinal microbiota. The microbes process the substances our bodies absorb, turning them into metabolites.

“In the past, our group – like many other research groups – focused on investigating whether maternal bacteria are transferred from the mother to the foetus. After all, almost no living bacteria is present in a healthy foetus. However, we now know that microbes produce small-molecule metabolites that are then transferred to the foetus.”

Niku is interested in finding out what sort of metabolites are transferred to foetuses and how these metabolites affect the foetal development.

“The metabolites are absorbed from the gut into the bloodstream, from where they’re transferred to placenta and to the foetus. We found that the concentrations of some metabolites are associated to the functioning of genes in the foetus. These genes are often linked to the immune system and its development.”

One thing that Niku’s research group is currently studying is extracellular vesicles that are produced by bacteria. Vesicles are small, liquid-filled sacs of membrane that are produced by both animal and bacterial cells. They are found in all bodily fluids. Although vesicles were discovered as early as 1946, research on them took off in earnest only in the 2000s. Vesicles contain various cellular products.

“Vesicles may play an important role in things such as recycling materials in the body, cell-to-cell communication, immune regulation and various diseases, among other things.”

Researchers at the University of Oulu were the first team in the world to publish a study that showed that vesicles are transferred from the maternal microbiota to the foetus. They discovered a previously unknown interaction mechanism between the maternal microbiota and the developing foetus.

Niku and his team is further investigating how the foetal immune system develops before birth. Is recognising the good bacteria that the body should not attack something that the foetus’ immune system learns already at this stage?

“It’s possible that vesicles carry bacterial macromolecules, such as proteins, into the foetus, thus training its immune system to discern good bacteria from pathogens. This would allow the offspring to recognise the gut microbes of their mother or their species prior to birth.”

The team’s next focus is on how vesicles present in foetal tissues, how they are able to pass through the placenta and how they affect the foetus.

According to Niku, before long, it can be determined what kind of bacteria and bacterial products the foetus needs to develop an optimal immune system.

“For example, it could allow us to develop probiotic products that contain necessary microbes or microbial products that are currently unavailable.”

Ari Turunen

14.11.2024

Read article in PDF

Citation

Turunen, A., & Nyrönen, T. (2024). Microbiota affects the immune system. https://doi.org/10.5281/zenodo.14823362

More information:

Developmental Interaction Lab at the University of Helsinki.

https://www.helsinki.fi/en/researchgroups/developmental-interactions

CSC – IT Center for Science

is a non-profit, state-owned company administered by the Ministry of Education and Culture. CSC maintains and develops the state-owned, centra- lised IT infrastructure.

https://research.csc.fi/cloud-computing

ELIXIR

builds infrastructure in support of the biological sector. It brings together the leading organisations of 21 Euro- pean countries and the EMBL European Molecular Biology Laboratory to form a common infrastructure for biological information. CSC – IT Center for Science is the Finnish centre within this infrastructure.

Without exposure to nature and its microbes, our immune system dos not function as it should. Mira Grönroos, community ecologist at the University of Helsinki, is interested in how spending time in the forest and connecting with nature affect the skin microbiota. One study increased the interaction between daycare children and natural microbiota. The studies showed, for the first time in the world, that the children’s immune system regulation changed while they were in contact with the diverse microbiota of natural materials.

The microbiota collected from sand, skin and gut was sequenced. The study examined how the microbiota changed between the test group and control group. In the study, the gene region of 16S ribosomal RNA (16S rRNA) was sequenced and the bioinformatics was carried out with the resources of Finnish ELIXIR Node, CSC – IT Center for Science. The 16S gene regions have remained unchanged for millions of years of bacterial evolution, which is why they can be used to identify different species.

The samples taken from the children’s skin helped identify the composition of the bacterial community, i.e. the metagenome. The relative amount of more than 30 bacterial genera increased on the children’s skin. The increase in the amount of immune-boosting gammaproteobacteria was connected to a change in interleukin-17A, which is associated with the development of allergies and immune-mediated diseases.

“Efficient sequencing methods and the data they generate are vital for studying microbial diversity and its impacts. Cultivation methods alone aren’t enough for studying things like this,” Grönroos says.

Next-generation sequencing technology enables the simultaneous sequencing of millions or even billions of DNA segments in a sample.

Read more here:

Just as those in the gut, the microorganisms in the skin play an important role in improving the body’s immune system. Mira Grönroos, community ecologist at the University of Helsinki, is studying the connections between the environment, microbiota and human health. She is interested in how spending time in the forest and connecting with nature affect the skin microbiota. Her aim is to find ways to improve the human immune system. This topic has not been studied much before.

Allergologist Tari Haahtela has made a special biodiversity hypothesis about human health: without exposure to nature and its microbes, our immune system dos not function as it should. If there is little or no interaction with nature, the immune system can’t learn to distinguish between what is dangerous and what is not. The body goes into a state of stress, which results in low-grade inflammation. The immune system overreacting may lead to diseases.

Mira Grönroos is working as a postdoctoral researcher in a multidisciplinary NATUREWELL project (2019–2025) funded by the Research Council of Finland. The project, led by Docent Riikka Puhakka, aims to study the impact of outdoor recreation on the health and well-being of Finnish youth. In the project, Grönroos focuses on studying how outdoor recreation and activities affect the human microbiota.

“The youth participated in a variety of outdoor activities in nature. Microbial samples were taken from their skin before and after the activities. We are studying whether hiking in a forest or spending time in urban nature changes the microbiota of the youth. We are also looking for ways to encourage young people to go out in nature,” Grönroos explains.

Grönroos is part of a research group led by Aki Sinkkonen, who works as a senior researcher at the Natural Resources Institute Finland (Luke). For the other studies in the group, researchers have been measuring interleukin and T-cell levels, for example. Cytokines, which are small proteins, function as messengers in the system controlling cellular functions in the body. These include interleukins, which increase or decrease inflammation. T cells help destroy pathogens living inside cells. B cells, on the other hand, are responsible for antibody-mediated immunity. The studies found that the levels of anti-inflammatory interleukin-10 proteins increased after microbial exposure.

According to Grönroos, the immune system and microbes are in constant interaction.

“The results so far are very encouraging, and now we’re studying the intensity of the natural exposure required. Spending time in nature also has many other wellbeing benefits that make the forest a good place to be. One way to increase microbial contact while enjoying nature is to eat snacks without washing or sanitising hands first,” says Grönroos.

Sinkkonen’s research group has been carrying out intervention studies, where the researchers’ intervention in the phenomenon under study is an integral part of the method. One study increased the interaction between daycare children and natural microbiota. The study followed daycare children between the ages of three and five in ten daycare centres in Lahti and Tampere for a month.

“The yard of the daycare centre was made greener to increase the children’s contact with natural materials. In another study, material containing microbiota was added to the sand in the yard,” Sinkkonen says.

The studies showed, for the first time in the world, that the children’s immune system regulation changed while they were in contact with the diverse microbiota of natural materials.

The microbiota collected from sand, skin and gut was sequenced. The study examined how the microbiota changed between the test group and control group. In the study, the gene region of 16S ribosomal RNA (16S rRNA) was sequenced and the bioinformatics was carried out with the resources of Finnish ELIXIR Node, CSC – IT Center for Science. The 16S gene regions have remained unchanged for millions of years of bacterial evolution, which is why they can be used to identify different species.